Short answer

This use case is for teams that want one shared link to behave differently depending on who or what opens it.

In Linkbreakers, you can place a workflow in front of the final destination, evaluate the device actor, and then send:

- likely human visitors to one destination

- likely agent traffic to another destination

- all other actors to a fallback route

This helps when links are being opened by AI assistants, automated fetchers, or preview systems before a real person ever arrives.

Why this use case matters

When links are shared in places like ChatGPT, Claude, Slack, or other AI-assisted environments, the traffic pattern changes.

A single shared URL may generate:

- a preview or retrieval request from an automated system

- an AI-agent fetch before a user sees the result

- a real human click after the link is recommended

If all of that traffic is treated the same way, your routing becomes messy and your analytics become less trustworthy.

What Linkbreakers adds to this use case

Linkbreakers gives you a routing layer between the shared link and the final destination.

That means you can:

- classify visits by actor type

- redirect agents to a dedicated destination

- keep human visitors on the normal experience

- collect different event data for each branch

- avoid mixing AI-originated fetches with real user sessions

For the broader workflow system, see what a workflow is in Linkbreakers.

The workflow pattern

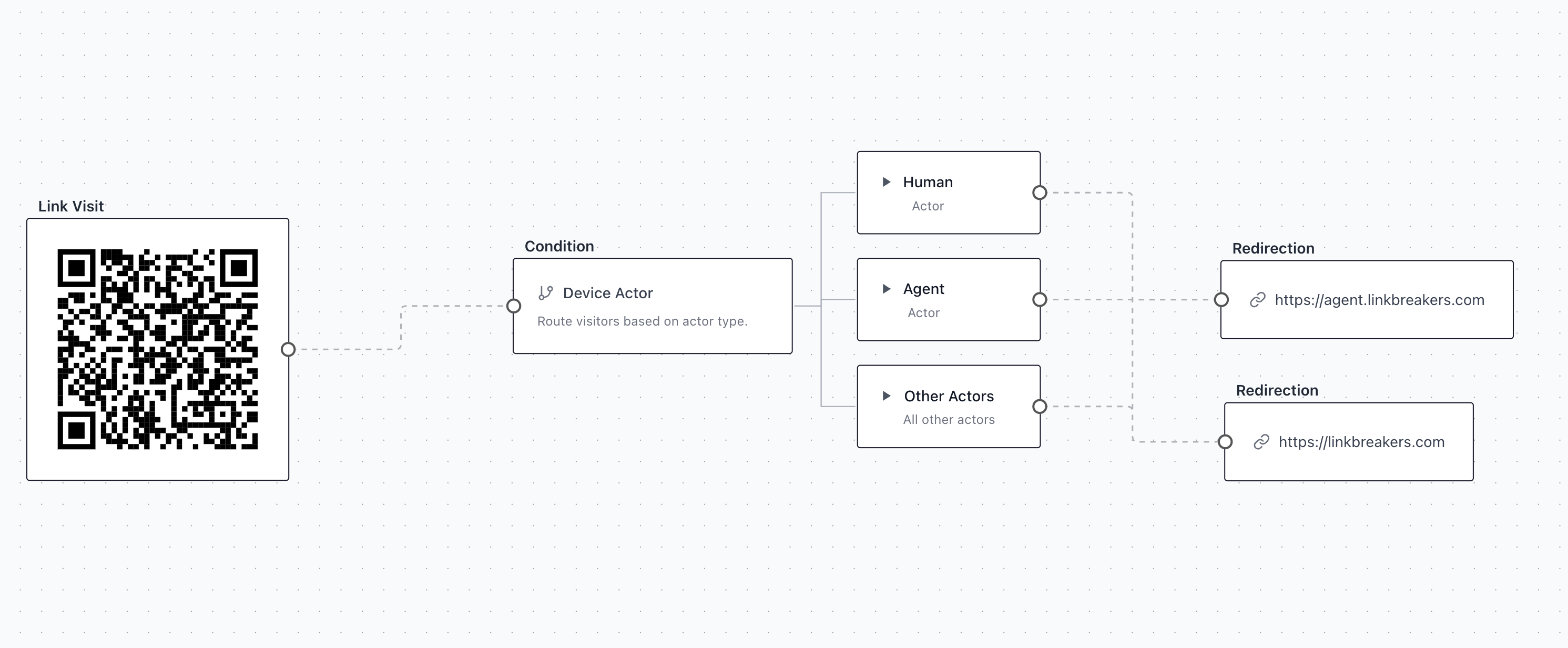

The workflow shown above follows a simple and useful pattern:

- A visitor opens a Linkbreakers URL or scans a QR code

- A Device Actor condition evaluates the request

- The workflow branches into Human, Agent, or Other Actors

- Each branch redirects to a different destination

In this example:

- Agent traffic is redirected to https://agent.linkbreakers.com

- Other Actors are redirected to https://linkbreakers.com

- Human traffic can be routed to its own destination as needed

This is a strong setup when you want AI-related traffic isolated from your primary conversion page.
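The branching logic above can be sketched in a few lines of Python. This is only an illustration of the decision, not how Linkbreakers implements it: the `Actor` enum, the `resolve_destination` function, and the Human destination (`https://example.com/landing`) are assumptions made for the example. The Agent and Other Actors URLs are the ones from the example above.

```python
from enum import Enum


class Actor(Enum):
    HUMAN = "human"
    AGENT = "agent"
    OTHER = "other"


# Agent and Other Actors destinations come from the example in this article.
# The Human destination is a placeholder; the article leaves it open.
DESTINATIONS = {
    Actor.HUMAN: "https://example.com/landing",
    Actor.AGENT: "https://agent.linkbreakers.com",
    Actor.OTHER: "https://linkbreakers.com",
}


def resolve_destination(actor_label: str) -> str:
    """Map a classified actor label to a redirect URL.

    Any label that is not a known actor type falls through to the
    Other Actors branch, which acts as the fallback route.
    """
    try:
        actor = Actor(actor_label.strip().lower())
    except ValueError:
        actor = Actor.OTHER
    return DESTINATIONS[actor]
```

Treating every unrecognized label as Other Actors mirrors the fallback branch: a visit always resolves to some valid destination, even when classification is uncertain.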

When this use case is the right fit

AI-search and AI-assistant discovery

Use this when your links are likely to be opened from AI assistants or AI-powered search products and you want to separate those visits from standard traffic.

Safer destination handling

Use it when the final destination should not treat automated fetches the same as real visitors.

Cleaner analytics

Use it when your team cares about attribution and wants to avoid counting likely agent requests as engaged human sessions.

Different experiences by actor type

Use it when the human experience should stay conversion-focused while agent or automated traffic should be sent to a lighter or more controlled destination.

Typical routing setups

Human to conversion page, agent to controlled endpoint

This is the most common pattern. Humans go to the normal landing page or workflow. Agents go to a separate destination that is easier to monitor or intentionally designed for automated access.

Human to product page, other actors to homepage

This is useful when you only want known human traffic to hit a deep page while all other traffic gets a safer fallback destination.

Human to form, agent to informational page

This is useful when you want to protect conversion forms from low-intent automated opens while still letting agents resolve a valid destination.

What data you can collect

This use case is not only about redirecting traffic. It is also about collecting cleaner context around how the link is being opened.

Teams commonly care about:

- actor classification

- timestamp

- source link or campaign

- destination branch reached

- visitor status

- device and browser context

- location and network context

For the broader analytics model, see what data you can collect from QR code scans, location, device, and behavior.
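If you export or store this data yourself, the fields listed above could be modeled roughly as follows. This is a sketch under assumptions: the `LinkVisitEvent` class, its field names, the `to_row` helper, the `spring-campaign` source link, and the timestamp are all made up for illustration and do not describe a documented Linkbreakers schema. The browser and location values are taken from the agent example in this article.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class LinkVisitEvent:
    """One recorded link open. Field names are illustrative only."""
    actor: str                 # actor classification, e.g. "Human" or "Agent"
    timestamp: datetime        # when the link was opened
    source_link: str           # source link or campaign
    branch: str                # destination branch reached
    visitor_status: Optional[str] = None
    browser: Optional[str] = None
    country: Optional[str] = None
    region: Optional[str] = None
    city: Optional[str] = None
    asn: Optional[str] = None  # network context, when available


def to_row(event: LinkVisitEvent) -> dict:
    """Flatten an event into a JSON-friendly dict with an ISO 8601 timestamp."""
    row = asdict(event)
    row["timestamp"] = event.timestamp.isoformat()
    return row


example = LinkVisitEvent(
    actor="Agent",
    timestamp=datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc),  # made-up time
    source_link="spring-campaign",  # hypothetical campaign name
    branch="agent",
    browser="ChatGPT-User 1.0",
    country="United States",
    region="TX",
    city="San Antonio",
)
```

Keeping optional fields as `None` rather than empty strings makes it easier to tell "not available" apart from "available but blank" in later analysis.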

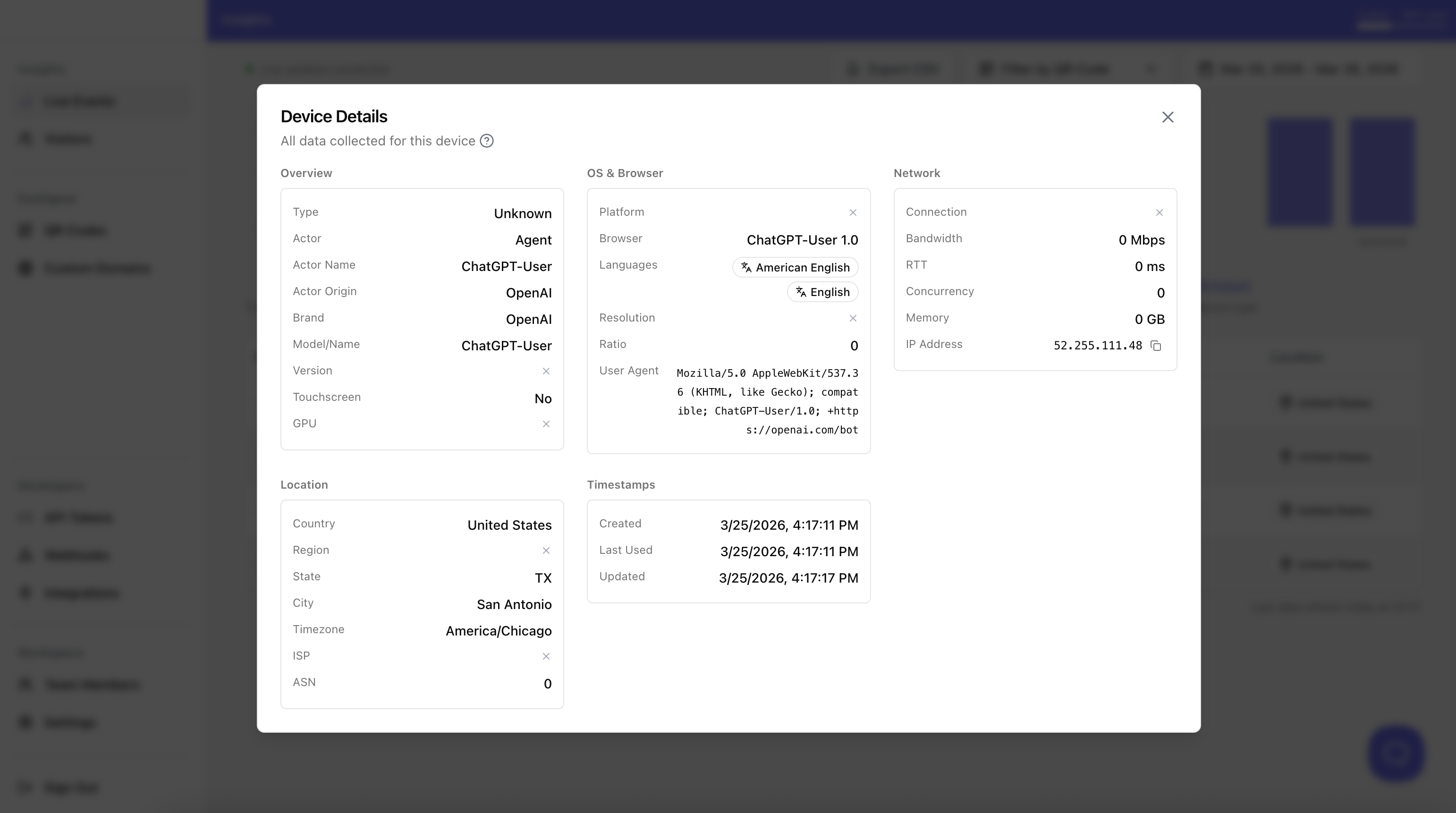

Example of detected agent data

In the example above, Linkbreakers identifies the visitor as an Agent and exposes useful fields about where that traffic came from.

The device details include signals such as:

- Actor: Agent

- Actor Name: ChatGPT-User

- Actor Origin: OpenAI

- Brand: OpenAI

- Model/Name: ChatGPT-User

- Browser: ChatGPT-User 1.0

- User agent: an OpenAI bot user agent string

- Country / region / city: location context such as United States, TX, San Antonio

- IP and network fields: connection, RTT, concurrency, memory, ASN, and IP context when available

That is what makes this use case practical. You are not only routing traffic differently; you are also preserving evidence of why the traffic was classified as agent traffic and what environment it came from.

How teams use that data

Once Linkbreakers detects likely agent traffic and records the actor details, teams usually do one or more of the following:

- route OpenAI or other agent traffic to a dedicated destination

- compare agent-originated visits against human visits in analytics

- verify which AI systems are actually hitting shared campaign URLs

- store the actor origin and device context for later analysis

- send the event into a webhook or downstream tool for alerting and reporting

This becomes especially useful when one campaign link is being discovered through both normal human sharing and AI-assisted recommendation flows.
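As one example of the analysis described above, the following sketch counts agent visits per actor origin to see which AI systems are hitting a shared URL. It assumes events have already been exported as plain dictionaries; the keys `actor` and `actor_origin` and the `agent_origins` function are hypothetical names chosen for the example, and the sample events are invented.

```python
from collections import Counter
from typing import Iterable, Mapping


def agent_origins(events: Iterable[Mapping[str, str]]) -> Counter:
    """Count agent visits per actor origin, ignoring non-agent events.

    Agent events without an origin are grouped under "unknown".
    """
    return Counter(
        event.get("actor_origin", "unknown")
        for event in events
        if event.get("actor", "").lower() == "agent"
    )


# Invented sample data for illustration.
events = [
    {"actor": "Agent", "actor_origin": "OpenAI"},
    {"actor": "Human"},
    {"actor": "Agent", "actor_origin": "OpenAI"},
    {"actor": "Agent"},
]

origin_counts = agent_origins(events)
```

The same counter can be compared against the number of human events to get a rough agent-to-human ratio per campaign.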

Practical benefits

| Problem | Linkbreakers workflow approach |
|---|---|
| AI or automated traffic mixes with human traffic | Branch by device actor before redirecting |
| One destination receives the wrong type of visitor | Route humans and agents differently |
| Analytics overstate real engagement | Separate likely automated opens from human visits |
| Teams need flexible traffic handling | Update the workflow without changing the shared link |

Best practices for this use case

- Keep the human branch focused on the main action you want

- Route agent traffic to a destination you can monitor and change safely

- Use a clear fallback for other actors

- Name the workflow so your team understands what the routing logic does

- Review the resulting analytics regularly to confirm the branching is producing the expected traffic split

Example interpretation of the workflow screenshot

The workflow in the screenshot is a clean example of agent targeting:

- the link visit enters one workflow

- the Device Actor condition becomes the main decision point

- the Agent branch is isolated and redirected to a dedicated agent URL

- non-agent traffic stays on standard Linkbreakers destinations

That is exactly the kind of setup teams use when they want to experiment with AI-specific routing without creating separate public links for every traffic type.

Combined with the device details view, it also gives you a full loop:

- detect the actor

- route the actor

- store the actor metadata

- analyze where the traffic came from later

Why teams use Linkbreakers for this

The main advantage is that the shared URL stays the same while the routing logic stays flexible.

You do not need to replace the link every time your AI-handling strategy changes. You can keep the same entry point and update the workflow behind it.

That makes this a practical use case for:

- AI-aware traffic handling

- cleaner attribution

- redirect control

- controlled data collection

- future experimentation as AI-driven discovery keeps changing
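The idea of a stable public link with changeable routing behind it can be illustrated with a small model. This is a conceptual sketch, not the Linkbreakers API: the `Workflow` class, the `links` registry, the `open_link` function, and the `spring-campaign` slug are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Workflow:
    """Routing rules that sit behind a public link."""
    routes: Dict[str, str]  # actor type -> destination URL
    fallback: str           # used when no route matches the actor

    def destination_for(self, actor: str) -> str:
        return self.routes.get(actor, self.fallback)


# The slug is the stable, public part. The workflow behind it can change.
links: Dict[str, Workflow] = {
    "spring-campaign": Workflow(
        routes={"agent": "https://agent.linkbreakers.com"},
        fallback="https://linkbreakers.com",
    )
}


def open_link(slug: str, actor: str) -> str:
    """Resolve the destination for an actor opening a given public link."""
    return links[slug].destination_for(actor)


before = open_link("spring-campaign", "agent")

# Strategy change: send agents to the main site instead.
# Only the workflow is edited; the public slug stays the same.
links["spring-campaign"].routes["agent"] = "https://linkbreakers.com"

after = open_link("spring-campaign", "agent")
```

Because callers only ever know the slug, the routing change takes effect without reissuing links or reprinting QR codes.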

Frequently asked questions

Can I send agents and humans to different destinations?

Yes. That is the core use case here. You can place a condition in the workflow and route different actor types to different redirects.

Why not just send everyone to the same page?

Because automated and human traffic can behave very differently. Separating them helps with analytics quality, destination safety, and more intentional user journeys.

Can I change the routing later without changing the shared link?

Yes. That is one of the main reasons to do this through Linkbreakers. The public link can stay stable while the internal workflow logic changes.

Is this only useful for QR codes?

No. It works for normal shared links too. QR codes are just one way to distribute the Linkbreakers URL.

About the Author

Laurent Schaffner

Founder & Engineer at Linkbreakers

Passionate about building tools that help businesses track and optimize their digital marketing efforts. Laurent founded Linkbreakers to make QR code analytics accessible and actionable for companies of all sizes.
